Learning Objectives

- Distinguish between strong and weak arguments or inferences.

- Outline the general scientific method.

- Identify the critical elements of strong inference as a way of knowing.

- Identify and describe the roles of basic elements of experimental design: dependent and independent variables, positive and negative controls.

Inductive and deductive reasoning

What is the difference between strong and weak arguments or inferences? Is that the same as valid or invalid arguments? See this video for a good explanation:

The first example in the video, which begins with the premise that all humans have DNA and concludes that Pat has DNA, is an exercise in deductive reasoning. Logically correct deductive reasoning leads to valid arguments and conclusions. However, much of science is dedicated to constructing generalities or models from specific examples and to dealing with uncertainties. This takes inductive reasoning: reaching conclusions based on evidence and distinguishing between strong and weak arguments. Scientific investigations use both deductive and inductive reasoning.

Scientific Method and Strong Inference

Why are some scientists more successful than others? Is it just luck, or that some problems are more difficult than others, or that some scientists are smarter, know more, or work harder? Platt, who coined the term “strong inference,” argues that the difference lies in a method of systematic scientific thinking (Platt, 1964). Platt’s Strong Inference paper is required reading for students enrolled in Biological Principles! (You may need to be logged in through the institute VPN to access the paper link.) Platt describes the method as follows:

In its separate elements, strong inference is just the simple and old-fashioned method of inductive inference that goes back to Francis Bacon. The steps are familiar to every college student and are practiced, off and on, by every scientist. The difference comes in their systematic application. Strong inference consists of applying the following steps to every problem in science, formally and explicitly and regularly:

1) Devising alternative hypotheses;

2) Devising a crucial experiment (or several of them), with alternative possible outcomes, each of which will, as nearly as possible, exclude one or more of the hypotheses;

3) Carrying out the experiment so as to get a clean result;

1′) Recycling the procedure, making subhypotheses or sequential hypotheses to refine the possibilities that remain; and so on.

—Platt, 1964

Platt expounds on two critical points in this method:

- Chamberlin’s idea of multiple working hypotheses, to avoid attachment to a favored hypothesis and confirmation bias;

- experiments designed to eliminate (falsify) one or more alternative hypotheses.

These two essays by Platt (1964) and Chamberlin (1890, 1897, reprinted 1995) are not just classics but timeless: they remain relevant and continue to stimulate discussion today. They are well worth reading and re-reading every few years.
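The elimination logic behind strong inference can be sketched in a few lines of Python. This is only an illustrative toy, not Platt's own formulation: the hypotheses (`H1` to `H3`), the two experiments, and their predicted outcomes are all hypothetical, invented here to show how each result excludes the hypotheses that predicted something else.

```python
from typing import Dict, List, Tuple


def strong_inference(
    predictions: Dict[str, Dict[str, str]],
    observations: List[Tuple[str, str]],
) -> List[Tuple[str, List[str]]]:
    """Eliminate hypotheses whose predictions disagree with observed results.

    predictions maps each hypothesis to {experiment: predicted outcome}.
    observations lists (experiment, observed outcome) in the order run.
    Returns the hypotheses still standing after each experiment.
    """
    surviving = set(predictions)
    history = []
    for experiment, observed in observations:
        # Keep only hypotheses that predicted what was actually observed.
        surviving = {h for h in surviving if predictions[h][experiment] == observed}
        history.append((experiment, sorted(surviving)))
    return history


# Hypothetical puzzle: why did a bacterial culture fail to grow?
predictions = {
    "H1": {"add nutrient": "growth", "raise temperature": "no growth"},     # medium lacks a nutrient
    "H2": {"add nutrient": "no growth", "raise temperature": "growth"},     # incubator is too cold
    "H3": {"add nutrient": "no growth", "raise temperature": "no growth"},  # inoculum was dead
}
observations = [("add nutrient", "no growth"), ("raise temperature", "growth")]

history = strong_inference(predictions, observations)
for experiment, remaining in history:
    print(f"after '{experiment}': still standing -> {remaining}")
```

Note that the hypothesis left standing at the end has not been proven; it has only survived the tests run so far, and a further experiment or a new alternative hypothesis could still eliminate it.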

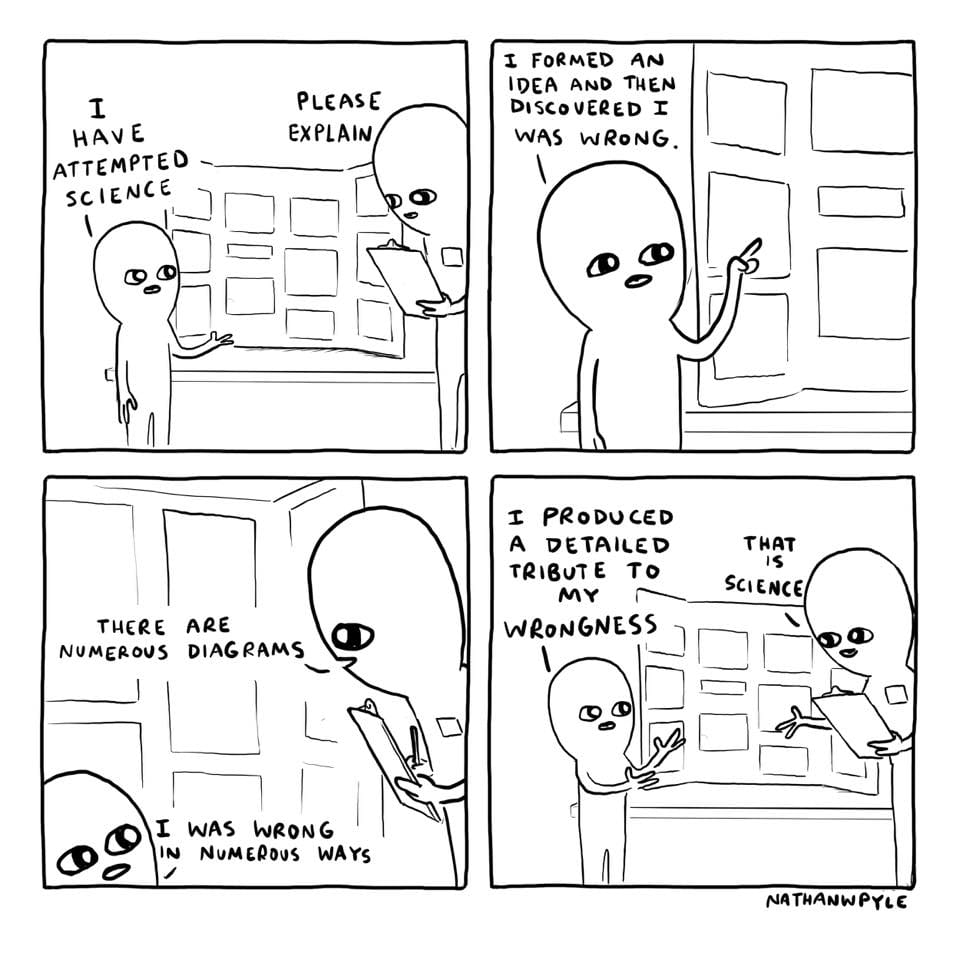

Here’s a fun video to illustrate the value of attempting to disprove your hypothesis:

Experimental Design

Well-designed experiments test hypotheses; they attempt to falsify (disprove or eliminate) as many hypotheses as possible. Typical experiments have one or more independent variables, which are factors that the experimenter sets at varying levels. Examples of independent variables include time or the amount of a particular substance added to reactions or to cell cultures. Dependent variables are the outcomes that depend on the independent variables. Typically, the independent variables are plotted on the x-axis of a graph, and the dependent variables are plotted on the y-axis.

The valid interpretation of experiments requires proper controls. Positive controls are experiments that are known to produce a positive result. Their purpose is to make sure that the instruments and reagents are all working properly. If an experiment with an unknown sample produces negative results, but the positive control produces the expected positive result, then we can be confident that the negative results were not due to faulty instruments or reagents.

Conversely, negative controls are experiments that should produce negative or null results. They also guard against faulty instruments or contaminated reagents. An experiment with an unknown that produces a positive result is valid only if the negative control shows the expected negative result.
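The way controls gate the interpretation of a result can be written out as a small decision rule. The sketch below is a simplified illustration of the two paragraphs above; the function name `interpret` and the three labels it returns are assumptions made for this example, not standard terminology.

```python
def interpret(
    sample_positive: bool,
    positive_control_positive: bool,
    negative_control_positive: bool,
) -> str:
    """Interpret an unknown sample's result in light of its controls.

    A positive result is trusted only if the negative control stayed negative.
    A negative result is trusted only if the positive control came up positive.
    """
    if sample_positive:
        # A signal in the negative control suggests contamination or a faulty assay.
        return "inconclusive" if negative_control_positive else "positive"
    # No signal in the positive control suggests the reagents or instruments failed.
    return "negative" if positive_control_positive else "inconclusive"


print(interpret(sample_positive=True, positive_control_positive=True, negative_control_positive=False))
print(interpret(sample_positive=False, positive_control_positive=False, negative_control_positive=False))
```

The first call reports a trustworthy positive result; the second is inconclusive because the positive control failed, so the negative result could simply reflect a reagent or instrument problem. A stricter rule, which this sketch does not implement, would require both controls to behave as expected before accepting any result.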

Questions for thought and discussion

Given that science is a way of knowing about the world around us (epistemology), what are the applications of strong inference outside the science laboratory?

What are the similarities and differences between strong inference and clinical diagnostics (see any episode of House, M.D.), or criminal investigation?

What are the limitations of strong inference and the scientific method?

Is it possible for the scientific method to definitively prove a hypothesis?

Credible Sources and how to find them

These days, when we have a question, we turn to the internet. Internet search engines like Google can link you to almost any content, and they even filter content based on your past searches, location, and preference settings. However, search engines do not vet content. Determining whether content is credible is up to the end user. We are also living in an era where misinformation can be mistaken for fact. How do we know what information to trust?

Scientific peer review is one safeguard that scientists apply to the reporting of results in scientific journals like Science, Nature, the Proceedings of the National Academy of Sciences (PNAS), and many hundreds of other journals. In peer review, research is read by anonymous reviewers who are experts in the subject. The reviewers provide feedback and commentary and ultimately give the journal editor a recommendation to accept, accept with revisions, or reject the manuscript. While this process is not flawless, it catches many major issues and problems and improves the quality of the evidence.

In the rare situation when a study has passed through the sieve of peer review and is later found to be deeply flawed, the journal or the authors can retract the work. Retraction is infrequent but does happen in science, and it is reassuring to know that there are ways to flag problematic work that has slipped through the peer review process. A prominent example of a retracted study in biology was one linking the MMR vaccine to autism (https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2831678/). Some sectors of the public still have misconceptions about supposed linkages between autism and vaccination. The putative autism-vaccination connection is a classic case of how correlation does not imply causation: the fact that two events co-occur (here, the recommended childhood vaccination schedule and the onset of autism symptoms) does not mean that one caused the other.

With the rapid spread of SARS-CoV-2 and the Covid-19 pandemic, the demand for scientific information about the novel coronavirus outpaced the rate at which journals could peer-review and publish research. Many research articles on SARS-CoV-2 were therefore released as preprints, meaning the manuscripts were made public before peer review was complete, typically while under consideration at a journal, because the authors wanted the information to be available for immediate use.

In the media, journalists draw on published and preprint articles, press releases, interviews, public records requests, and other sources for their information. They cite their sources when possible and are responsible to their editors for the quality and authenticity of their reporting. Some media sources have better track records than others for unbiased reporting.

Websites and social media posts are places where anyone can post anything and make claims whether or not they are supported by evidence. As the end users, our job is to find sources that are supported by evidence, cited ethically, and otherwise credibly presented. We have the responsibility to notice whether an organization is funded or motivated in ways that might generate bias in its content. We have the obligation to cross-reference ideas from unvetted sources to help us establish how credible the source of information is. Science is based on evidence, and we will work this semester to identify and interpret scientific evidence.

UN Sustainable Development Goal (SDG) 4: Quality Education – Distinguishing between strong and weak arguments or inferences is important for promoting critical thinking skills and developing evidence-based decision-making. These skills are critical for developing sustainably and engaging in global citizenship.

References:

Platt, John R. “Strong Inference.” Science, New Series, Vol. 146, No. 3642 (Oct. 16, 1964), pp. 347-353.

Raup, David C. and Thomas C. Chamberlin. “The Method of Multiple Working Hypotheses.” The Journal of Geology, Vol. 103, No. 3 (May 1995), pp. 349-354. Originally published in 1897.

I highly recommend reading “Science Isn’t Broken: It’s Just a Hell of a Lot Harder than We Give It Credit for” by Christie Aschwanden. This is a FiveThirtyEight feature on the reproducibility of scientific studies, scientific misconduct, statistics, human fallibility, and the nature of scientific investigations. It includes an interactive analyze-it-yourself demo that lets readers investigate whether the economy does better with Democrats or Republicans in power.

http://fivethirtyeight.com/features/science-isnt-broken/